March 1, 2026

The Commoditisation of AI

Why OpenAI risks losing when value shifts from models to platforms

OpenAI is in trouble

And no — it’s not just because of circular deals and 40x revenue multiples, although that part matters. [1]

The real reason is more fundamental. LLMs are becoming a commodity.

Models are getting better. But more importantly, they are getting cheaper, more similar and increasingly interchangeable. That’s a dangerous combination. Because if intelligence becomes cheap and interchangeable, then the value in AI is not captured by providing the model. It is captured somewhere else.

The real battle is not about building the smartest model. It is about owning where that intelligence is used — the interface, the workflow, the entry point. And that is exactly where OpenAI is vulnerable, while companies like Google, Microsoft, Meta and Apple already control the surfaces where users spend their time.

Unless OpenAI can own the interface where AI actually lives — not just a chat window — they risk being reduced to something much less powerful: an API. And that is a dangerous place to be in a world where the platform owners set the rules.

That is why OpenAI is making bold bets — including a potential move into AI-first hardware — to try and redefine where that interface lives.

Because APIs don’t win markets. Platforms do.

LLMs are becoming commodities

To understand why OpenAI is in trouble, we need to look at the underlying economics of LLMs. And those economics are starting to look a lot like a commodity market.

Performance is converging. Across benchmarks, the leading models are all hitting similar levels. In many cases, they are already near the ceiling of what the tests can measure.

Recent benchmarks show that the top models — GPT-5, Claude Opus and Gemini — are often separated by only a few percentage points across reasoning, coding and math tasks. [2]

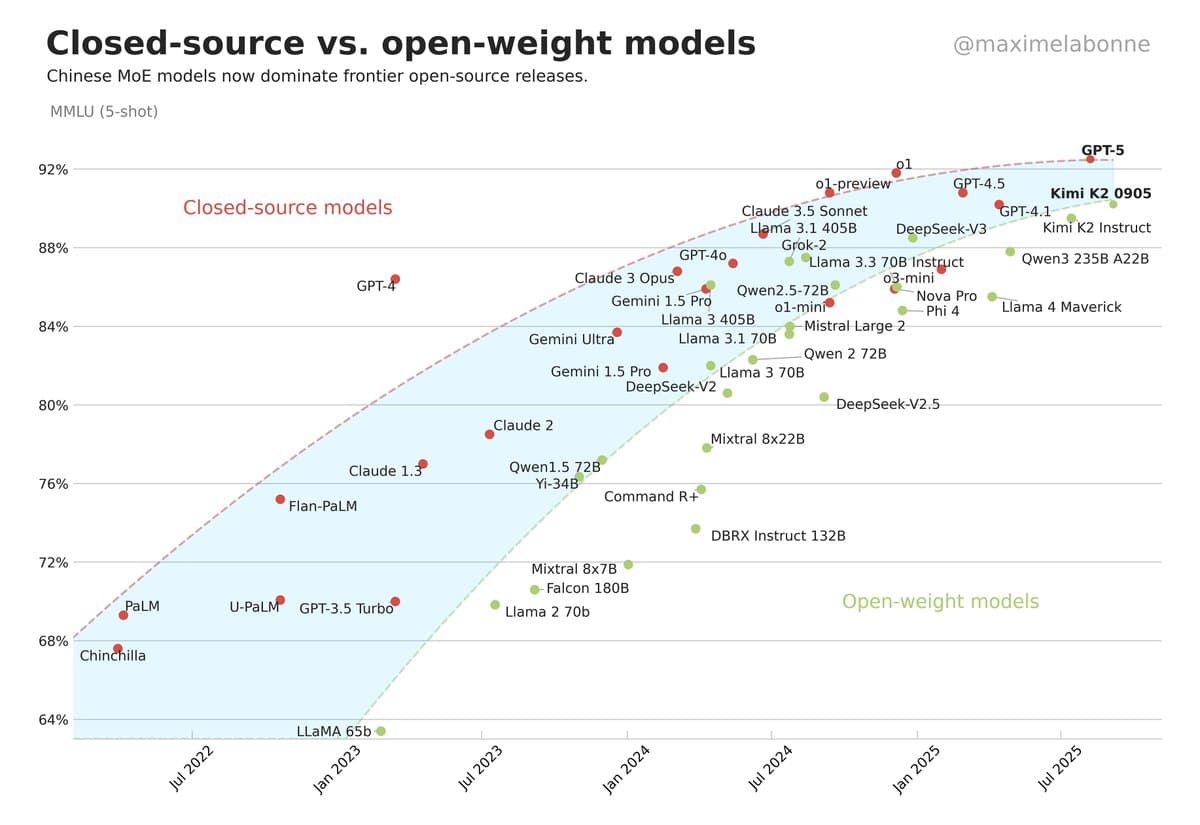

[3] Benchmarks such as MMLU illustrate how LLM performance has converged over time. While MMLU is used less today as models have saturated it, it still highlights how tightly clustered frontier models have become on measures of general intelligence.

More importantly, in real-world usage, the differences are getting harder to notice. For most normal use cases — questions, writing, coding — the average user cannot meaningfully distinguish between models. They are all just… very good.

That matters. Because once performance differences become marginal, many users stop choosing based on quality. They start choosing based on everything else.

Then there is the next piece — and arguably the most important one.

For most companies, changing model provider is nothing more than an API rewrite. There is no migration. No real lock-in. No deep dependency. Increasingly, companies design their systems to be explicitly model-agnostic, making it even easier to swap between providers.
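To make "model-agnostic" concrete, here is a minimal sketch of what such a design can look like. Everything in it is hypothetical: `ChatModel`, `ProviderA`, `ProviderB` and `summarise` are illustrative names, and the providers are stubs that make no network calls rather than real vendor SDKs. The point is only that the application code depends on a small interface, so swapping vendors touches one lookup table.

```python
from dataclasses import dataclass
from typing import Protocol


class ChatModel(Protocol):
    """Hypothetical interface: anything that turns a prompt into text."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class ProviderA:
    # Stand-in for a real vendor client; no network call is made here.
    model_name: str = "provider-a-large"

    def complete(self, prompt: str) -> str:
        return f"[{self.model_name}] {prompt}"


@dataclass
class ProviderB:
    # A second stub vendor exposing the same method.
    model_name: str = "provider-b-pro"

    def complete(self, prompt: str) -> str:
        return f"[{self.model_name}] {prompt}"


# The only place in the codebase that knows which vendors exist.
PROVIDERS: dict[str, ChatModel] = {
    "a": ProviderA(),
    "b": ProviderB(),
}


def summarise(text: str, model: ChatModel) -> str:
    """Application logic depends on the interface, not on a vendor."""
    return model.complete(f"Summarise: {text}")


if __name__ == "__main__":
    # Swapping provider is a one-key change.
    for key in ("a", "b"):
        print(summarise("quarterly report", PROVIDERS[key]))
```

In a real system the stubs would wrap each vendor's client, and prompts often need some tuning per model, so the switch is cheap rather than free.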

Switching.

The same is true for end users. Switching between ChatGPT, Gemini, Claude or any other chatbot is trivial. It is another tab, another app, another prompt. There is no real cost to trying something else, and very little reason not to. Most users do not build habits around a specific model — they just use whatever is available, convenient or already integrated into the tools they are using.

No migration. No lock-in. No real friction.

This has a very simple consequence. If models are similar, and switching between them is easy, then competition shifts to price.

Prices for LLMs are not collapsing in a simple way. Frontier models are still expensive. But something more interesting is happening. Prices are converging.

Across OpenAI, Google, Anthropic and others, frontier models now sit in a remarkably similar range. GPT-5, Gemini Pro and similar models all cost roughly $1–$2 per million input tokens and around $10 per million output tokens. More advanced models cluster slightly higher, but the pattern is clear: competition is compressing prices into the same band. [4]

That is not what a differentiated market looks like. That is what a commodity market looks like.

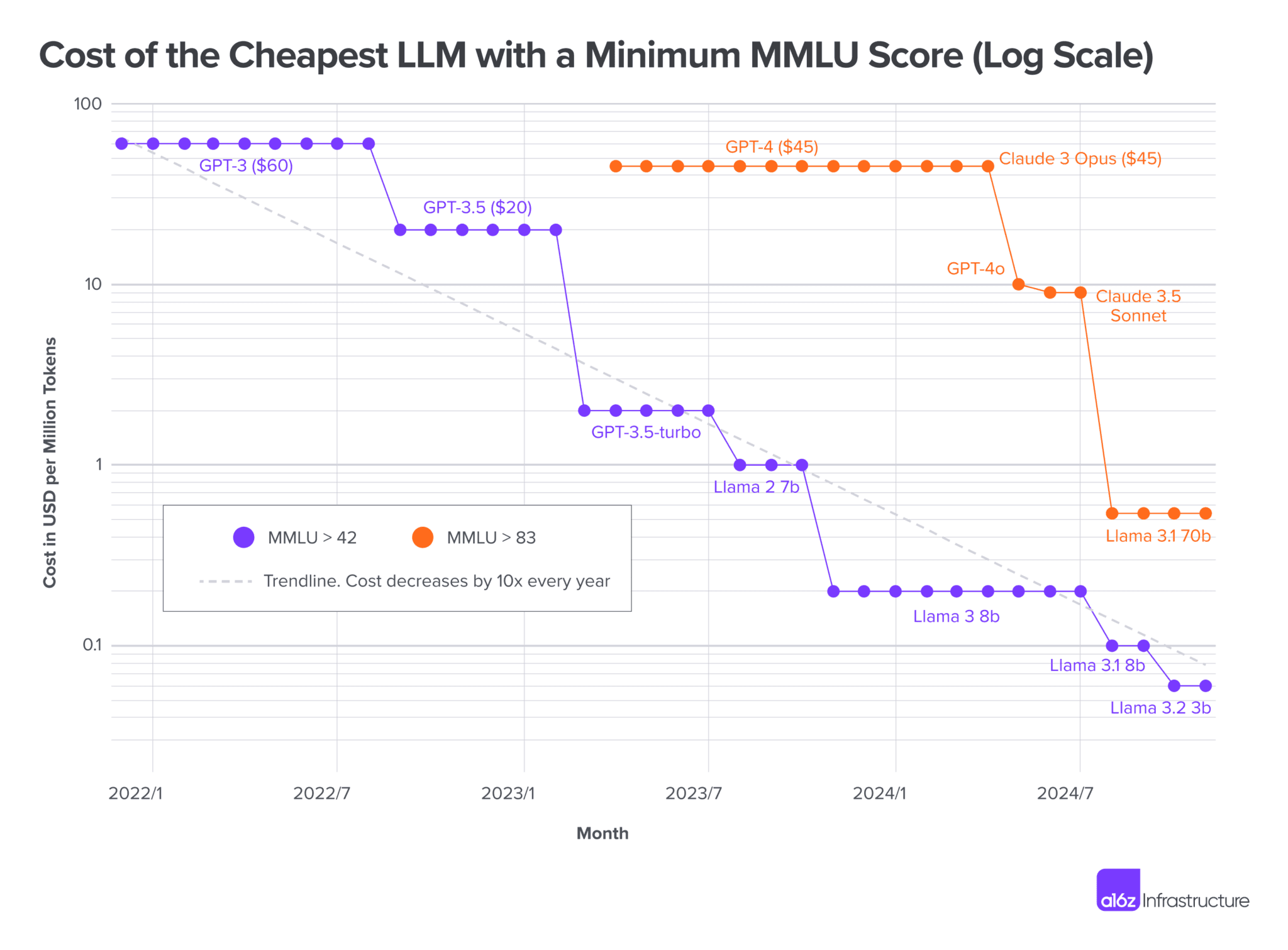

But that is not the most important trend. What really matters is that the cost of achieving a given level of intelligence is collapsing. [5]

[5] The chart shows the cost of the cheapest model reaching a given capability level (measured by MMLU). Over time, equivalent levels of intelligence have become significantly cheaper, often by an order of magnitude or more.

What required a frontier model a year ago can now be achieved with much cheaper models. In many cases, the same level of performance becomes 10–100x cheaper within a year.

At the same time, an entirely new price tier has emerged. Models like Gemini Flash, GPT-nano and other “mini” variants operate at around $0.1–$0.6 per million tokens, while frontier models still sit closer to $10–$20. [4]

Chinese models are pushing this even further. DeepSeek models offer competitive capabilities at roughly $0.3–$1 per million tokens, undercutting many Western models by an order of magnitude.

Put differently, there is now a 10–100x price gap between frontier AI and “good enough” AI.
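As a rough sanity check on that gap, the price bands quoted above can simply be divided by each other. This is a back-of-the-envelope sketch, not a pricing model: the bands are the approximate figures from the text, they blur input and output token prices together, and `price_gap` is just an illustrative helper name.

```python
# Approximate per-million-token price bands quoted in the text (USD).
CHEAP_TIER = (0.1, 0.6)        # "mini" variants
FRONTIER_TIER = (10.0, 20.0)   # frontier models


def price_gap(cheap, frontier):
    """Return the (smallest, largest) frontier-to-cheap price ratio."""
    cheap_low, cheap_high = cheap
    frontier_low, frontier_high = frontier
    smallest = frontier_low / cheap_high   # cheapest frontier vs priciest mini
    largest = frontier_high / cheap_low    # priciest frontier vs cheapest mini
    return smallest, largest


if __name__ == "__main__":
    low, high = price_gap(CHEAP_TIER, FRONTIER_TIER)
    print(f"gap: {low:.0f}x to {high:.0f}x")  # prints "gap: 17x to 200x"
```

On these rough numbers the gap comes out at one to two orders of magnitude, which is the same ballpark as the 10–100x figure.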

And as soon as “good enough” is enough, price becomes the deciding factor.

The economics push in the same direction. Training a frontier model costs tens or even hundreds of millions of dollars. It is a massive upfront investment. [6]

But once the model exists, the cost of serving one more request is relatively low, and falling. That creates a powerful dynamic. Low and falling marginal cost in a competitive market tends to drive prices down toward that marginal cost.
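The shape of that dynamic is easy to see with a small calculation: average cost per request is the training cost spread over every request served, plus the marginal cost of one more. The numbers below are illustrative assumptions chosen only to show the curve (a $100 million training run, a tenth of a cent per extra request); they are not taken from any of the cited sources.

```python
def average_cost_per_request(training_cost: float,
                             marginal_cost: float,
                             requests: int) -> float:
    """Average cost = fixed cost spread over all requests + marginal cost."""
    if requests <= 0:
        raise ValueError("requests must be positive")
    return training_cost / requests + marginal_cost


# Illustrative assumptions, not figures from the article's sources.
TRAINING_COST = 100_000_000.0   # USD, paid once up front
MARGINAL_COST = 0.001           # USD per additional request

if __name__ == "__main__":
    for requests in (10**6, 10**9, 10**12):
        avg = average_cost_per_request(TRAINING_COST, MARGINAL_COST, requests)
        print(f"{requests:>18,} requests -> ${avg:,.4f} per request")
```

As volume grows, the average cost falls toward the marginal cost. A provider with scale can therefore price close to that floor, and competitors with similar models have little choice but to follow.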

Put those pieces together:

- Models are getting cheaper

- Models are getting similar

- Switching between them is easy

That is the textbook definition of a commodity market.

Commodity markets do not reward producers with high margins. They reward scale, efficiency — and often, someone else entirely.

Which brings us to the uncomfortable reality. Everyone is investing billions into building these models. But if current trends continue, they may end up producing something that looks less like proprietary software — and more like infrastructure.

Digital steel. Critical. Ubiquitous. Necessary for everything.

But not exactly where the profits to support Silicon Valley lifestyles are made.

From models to platforms

If models are becoming commodities, then the value does not disappear. It moves.

And in AI, it moves to a very specific place: the interface, the workflow, the entry point — the place where the user actually interacts with the system.

Models are interchangeable. Workflows are not.

That is good news for companies that already own the workflows. And it is a problem for companies that only provide the models.

Think about it like this. It is easy to switch between ChatGPT and Gemini. It is just another tab, another prompt, another API call. But try switching the system you use to actually do your work.

A lawyer does not casually switch from one legal platform to another. Moving from something like Legora to Harvey is not just changing a tool — it is changing workflows, documents, habits, and processes.

The same is true for a consultant working inside Microsoft 365. When Copilot is integrated into your email, your documents, your spreadsheets, and your meetings, you are not just using AI — you are working inside it. At that point, switching is no longer trivial. It is painful.

That is the difference. Models are interchangeable. Workflows are not.

This is where moats are built. Not in the model itself, but in how that model is embedded into real work.

Platforms create lock-in because they sit at the center of repeated usage. They accumulate context. They integrate into other tools. Over time, they become the default way of doing things. That is incredibly hard to replace — and that is where the value will be captured.

You can already see this playing out.

Google is embedding AI into search, Gmail, Docs, and Calendar. Microsoft is doing the same across the entire enterprise stack. Meta owns a different kind of surface: attention. Billions of people already spend hours inside its apps, and if AI becomes the way content is created and consumed, Meta already controls that entry point. Even Apple, often criticized for being behind in AI, owns the most important interface of all: the device in your pocket.

They do not need to win the model race. They just need to integrate AI into the workflows they already control.

This is why the real game is not intelligence. It is control of the entry point — the first place a user goes when they want something done. Search, documents, messages, code, social feeds, devices.

Control that, and you control the workflow. Control the workflow, and you control the user. And once you control the user, you have lock-in.

Users do not switch workflows for marginal improvements. No one leaves their entire setup because another model is 1% better. They stay where their data is, where their habits are, and where their work already lives.

That is where the moat is.

And OpenAI does not have that moat.

ChatGPT may still be the most popular way to interact with AI today. It has distribution, brand, and strong models. But it is still just an app. It lives inside a browser or on top of someone else’s operating system. It does not own the workflow. It does not own the platform. It does not control the entry point.

That creates a structural weakness.

Because as AI becomes embedded into Google, Microsoft, Meta, and Apple’s ecosystems, users will naturally use AI where they already are. In their search. In their documents. In their messages. In their feeds. In their devices. The standalone chatbot becomes less central — not because it is worse, but because it is not where the work happens.

And once users move into those workflows, they rarely come back.

OpenAI is competing for intelligence in a world where value is captured at the interface.

One API call away from irrelevance.

OpenAI’s platform problem

Value is shifting away from the models and into the platforms, the workflows, the entry points, which is exactly where OpenAI is weakest. If AI ends up living inside Google, Microsoft, Meta, or Apple’s ecosystems, OpenAI does not control the user. It becomes a supplier. A backend. One model among many.

The risk is simple. If you do not control the interface, you do not control the user.

The problem is that it is very hard to win from the outside. ChatGPT is still an app, and apps live inside someone else’s platform. They can be replaced, integrated, or simply bypassed.

Software alone does not solve this. Even if OpenAI builds better tools, they are still operating within someone else’s ecosystem.

That is why moves like Atlas, OpenAI’s own browser, start to make sense. A browser is not just another product — it is a step closer to the user. A step closer to the entry point. [7]

But even that has limits. A browser still runs on top of someone else’s operating system. It does not fully solve the dependency.

So the question becomes: how do you actually control the entry point?

In 2025, OpenAI made a move that starts to make sense in this context. It acquired io, the startup founded by Jony Ive, the designer behind the iPhone, for $6.5 billion, even though the company had not yet released a product. [8]

No one knows exactly what they are building. But the direction is clear.

This is not about building another app. It is about building a new interface. Something that does not live inside a browser, or inside an existing operating system. Something that moves AI from something you open to something that is just there — integrated into how you interact with technology.

That is why the hardware bet matters.

Hardware is one of the few ways to control the default interaction. It is where the user starts. It is how Apple built its ecosystem. It is how the interface becomes the platform.

Software can get you closer to the user. Hardware can put you in front of them.

In that context, this starts to look less like a side project — and more like an attempt to redefine where AI actually lives.

OpenAI is chasing the game in the 90th minute. They can see the field shifting, and they know incremental improvements will not be enough. So they are making a bet that goes beyond better models or better apps.

They are betting that AI will not just be integrated into existing platforms.

They are betting that AI becomes the platform.

They need AI to go from being the add-on to being the operating system.

And they are betting that Jony Ive can help them build it.

Two futures for AI

There are two possible futures for AI.

In the first, AI is absorbed into existing platforms. It lives inside Google, Microsoft, Meta, Apple, and a growing layer of vertical tools like Cursor or Legora. It becomes embedded in the workflows people already use, integrated so deeply that it fades into the background. The interface does not change. The winners are the ones who already control where work happens.

In that world, OpenAI loses.

Not in a dramatic collapse, but in a slow, structural way. It becomes infrastructure — one model among many, competing on price and performance in an increasingly commoditized market. The leverage shifts to the platforms, and OpenAI is left supplying intelligence into systems it does not control. Eventually, it is acquired. Not at today’s valuations, but at a fraction of them. A necessary piece of the stack, but no longer the center of it.

And importantly — this is the default path.

In the second future, something very different happens.

AI does not just integrate into existing platforms. It becomes the interface — the entry point, the place where users go to get things done. It might be a device, or something else entirely. That part is still unclear. What matters is that it becomes the primary way millions of people interact with technology, sitting above existing apps, workflows, and systems.

In that world, whoever controls that interface wins. That could be OpenAI, but it does not have to be. If OpenAI manages to build it — if it becomes the entry point — then it wins. Not because it has the best model, but because it owns the interface, the workflow, and the user. Instead of supplying intelligence to someone else’s platform, it becomes the platform itself, capturing the ecosystem and the value that comes with it. But if someone else gets there first, the outcome looks very different.

That future does not happen by accident. It requires a break from the existing platforms — a new default, a new way to interact with technology. It requires something that is not just better, but fundamentally different.

It requires a miracle.

It requires Jony Ive to do what he has done before: to redefine how people interact with technology, and to take AI from something you open to something that is simply there. Not an app, but an interface. Not a feature, but the entry point.

Not an incremental improvement.

A shift.

Conclusion: The real AI race

The AI race is not about intelligence. As models become cheaper, better, and increasingly interchangeable, the value shifts to the interface, the workflow, and the entry point — the place where users actually interact with AI and where real lock-in is created. Without that, even the most powerful model is just another provider competing on price.

That is the position OpenAI finds itself in. It has the models and the momentum, but it does not control where the work happens. The gap between the possible outcomes is enormous: in one future, OpenAI becomes infrastructure; in the other, it becomes the platform. The difference is not intelligence — it is where AI actually lives.

Without user lock-in, every AI company is just one API call away from irrelevance — and OpenAI knows it. Whether it becomes the platform or just another provider now depends on one thing: who wins the entry point — and whether Jony Ive can deliver the miracle.

Sources

[1] Datamation — OpenAI Valuation Reaches $500B

https://www.datamation.com/artificial-intelligence/openai-500b-valuation

[2] LM Council — LLM Benchmark Comparisons

https://lmcouncil.ai/benchmarks

[3] Maxime Labonne — Closed vs Open-weight Model Performance (MMLU)

https://x.com/maximelabonne/status/1972615048511250647

[4] PricePerToken — Live LLM Pricing Comparison

https://pricepertoken.com

[5] Andreessen Horowitz (a16z) — LLMflation: The Cost of Intelligence is Falling

https://a16z.com/llmflation-llm-inference-cost

[6] AboutChromebooks — AI Model Training Cost Statistics

https://www.aboutchromebooks.com/machine-learning-model-training-cost-statistics

[7] OpenAI — Introducing ChatGPT Atlas

https://openai.com/index/introducing-chatgpt-atlas

[8] Reuters — OpenAI to Acquire Jony Ive’s Startup io

https://www.reuters.com/business/openai-acquire-jony-ives-hardware-startup-io-products-2025-05-21